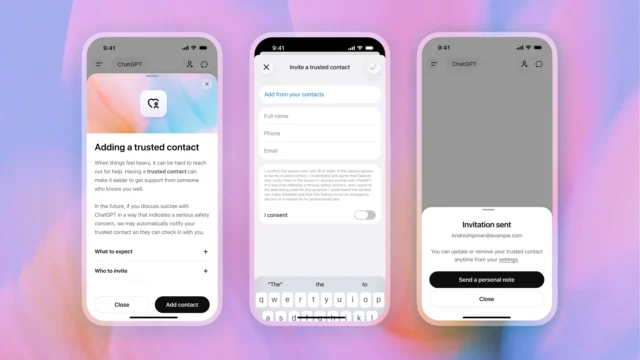

OpenAI is rolling out Trusted Contact, an optional ChatGPT safety feature that lets adults nominate one person who may be notified if the system detects a serious self-harm concern.

Setup is deliberate and requires consent on both sides. Users can add one adult as a trusted contact through ChatGPT settings, and that person has to accept the invitation before the feature becomes active. If the system later flags a conversation as potentially serious, ChatGPT tells the user that a notification may be sent and encourages them to reach out directly first.

OpenAI says a small team of specially trained reviewers checks the situation before any alert goes out. If they agree there may be a serious safety concern, the trusted contact gets a limited notification by email, text message or in-app message. The company says the alert does not include chat transcripts or conversation details.

This is not a replacement for emergency services, therapy or crisis hotlines. It adds one more point of human contact alongside them.

That distinction matters. AI tools are increasingly being used for emotional support, which means companies are under pressure to build safeguards that do more than display generic warnings. Trusted Contact tries to bridge the gap between an on-screen safety prompt and a real person who can check in.

OpenAI says the feature was developed with clinicians, researchers and mental health organizations, and it points to social connection as one of the protective factors that can reduce suicide risk. Users can remove or change their trusted contact later, and the trusted contact can remove themselves too.

If you or someone nearby is in immediate danger, call emergency services. In the United States, you can call or text 988 to reach the 988 Suicide & Crisis Lifeline.